Overview

Mixture models provide a principled way to capture heterogeneity in data by positing that observations are generated from a combination of latent components. A classical example is the Gaussian mixture model, which can flexibly approximate complex distributions. The same intuition has inspired mixture-of-experts architectures in machine learning, where multiple specialized predictors are combined under the guidance of a gating network [1,2].

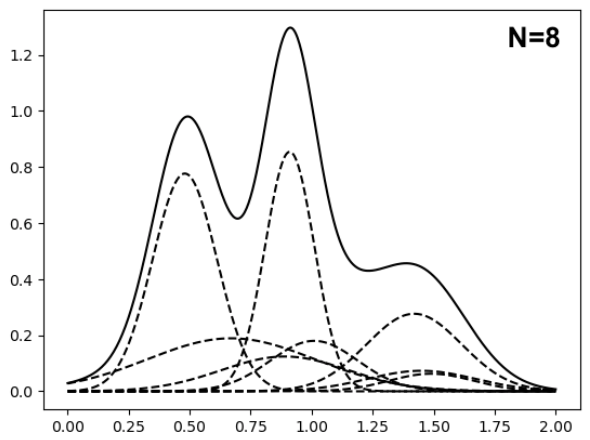

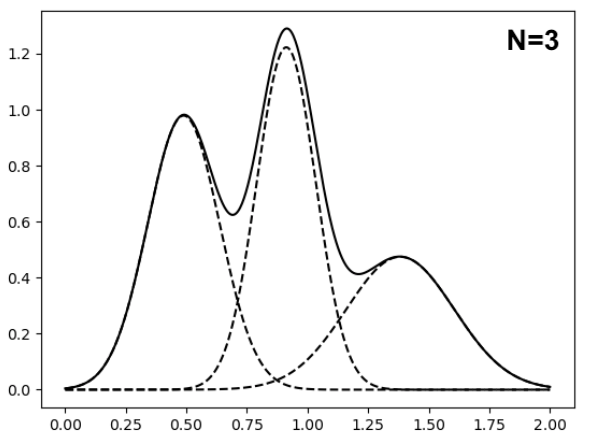

While mixture models are powerful, they can quickly become unwieldy as the number of components grows. For example, in sequential settings such as Bayesian filtering, mixture components can proliferate exponentially with time. For both computational and inferential reasons, it is therefore desirable to reduce the number of mixture components while still preserving the essential features of the underlying distribution. This task, known as mixture reduction (MR), seeks ways to approximate a large or growing mixture model with a simpler one that remains faithful to the data-generating structure (Figure 1).

Figure 1: Illustration of MR. Two Gaussian mixtures of different orders have similarly shaped density functions (solid lines). The dashed lines are the component density functions.
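In symbols, the reduction task can be stated roughly as follows. The notation ($N$, $M$, $w_n$, $\phi$, $D$) is introduced here for illustration and is not taken from the cited papers; $D$ stands for whichever discrepancy between mixtures a given MR method chooses to minimize.

$$
p_G(x) = \sum_{n=1}^{N} w_n\, \phi(x; \mu_n, \Sigma_n), \qquad
p_{\tilde G}(x) = \sum_{m=1}^{M} \tilde w_m\, \phi(x; \tilde\mu_m, \tilde\Sigma_m), \qquad M < N,
$$

$$
\tilde G^{*} \in \arg\min_{\tilde G \,:\, \text{order}(\tilde G) = M} D(G, \tilde G).
$$

Here $\phi(\cdot\,; \mu, \Sigma)$ is the Gaussian density, $G$ is the original mixture of order $N$, and $\tilde G$ ranges over candidate mixtures of the smaller order $M$.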

Although MR has been extensively studied [3], existing approaches remain limited. Heuristic methods [4–8] are typically ad hoc and lack theoretical guarantees, while principled formulations [9,10] are often too computationally expensive for practical use.

Our approach: Optimal transport framework

To bridge this gap, we proposed a new perspective grounded in optimal transport [11]. Specifically, we define the MR estimate as the minimizer of a composite transportation divergence between the candidate and original mixtures. To solve this optimization problem, we designed an iterative majorization–minimization algorithm that is computationally efficient and has provable convergence guarantees. The method is also flexible: the cost function used in the composite divergence can be tailored to the target problem, and can even be chosen to trade off running time against statistical accuracy.
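The alternating structure of such an algorithm can be sketched in a few lines. The example below is a minimal illustration, not the published implementation: it assumes the component-level cost is the KL divergence from an original component to a reduced one, in which case the transport plan reduces to a nearest-component assignment and the component update reduces to moment matching. The function names `kl_gauss` and `reduce_mixture`, the weight-proportional initialization, and the stopping rule are all hypothetical choices made for this sketch.

```python
import numpy as np

def kl_gauss(mu0, S0, mu1, S1):
    """KL divergence KL( N(mu0, S0) || N(mu1, S1) ) between two Gaussians."""
    d = mu0.shape[0]
    S1_inv = np.linalg.inv(S1)
    diff = mu1 - mu0
    _, logdet0 = np.linalg.slogdet(S0)
    _, logdet1 = np.linalg.slogdet(S1)
    return 0.5 * (np.trace(S1_inv @ S0) + diff @ S1_inv @ diff - d + logdet1 - logdet0)

def reduce_mixture(w, mus, Sigmas, M, n_iter=50, seed=0):
    """Reduce an N-component Gaussian mixture to M components (illustrative sketch).

    w: (N,) weights summing to 1; mus: (N, d) means; Sigmas: (N, d, d) covariances.
    """
    rng = np.random.default_rng(seed)
    N = len(w)
    # Assumed initialization: sample M original components proportionally to weight.
    idx = rng.choice(N, size=M, replace=False, p=w)
    r_mus, r_S, r_w = mus[idx].copy(), Sigmas[idx].copy(), np.full(M, 1.0 / M)
    assign = np.full(N, -1)
    for _ in range(n_iter):
        # Assignment step: send each original component to its cheapest reduced one.
        cost = np.array([[kl_gauss(mus[n], Sigmas[n], r_mus[m], r_S[m])
                          for m in range(M)] for n in range(N)])
        new_assign = cost.argmin(axis=1)
        if np.array_equal(new_assign, assign):
            break  # assignments are stable, so the updates would not change
        assign = new_assign
        # Update step: each reduced component is the moment-matched merge of its group.
        for m in range(M):
            mask = assign == m
            if not mask.any():
                r_w[m] = 0.0  # empty group: keep its parameters, give it zero weight
                continue
            r_w[m] = w[mask].sum()
            p = w[mask] / r_w[m]
            mu = p @ mus[mask]
            diff = mus[mask] - mu
            r_S[m] = (np.einsum("n,nij->ij", p, Sigmas[mask])
                      + np.einsum("n,ni,nj->ij", p, diff, diff))
            r_mus[m] = mu
    return r_w, r_mus, r_S
```

With this cost, the overall mean of the mixture is preserved by construction, because every merge is a moment-matched average. Other cost functions would change both the assignment rule and the form of the component update.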

Key paper: Zhang, Q., Zhang, A. G., and Chen, J. (2024). Gaussian Mixture Reduction With Composite Transportation Divergence. IEEE Transactions on Information Theory, 70(7), 5191–5212.

Application 1: Federated learning of finite mixture models

In federated learning of finite mixture models, massive heterogeneous data are partitioned across many clients. Local estimators must then be aggregated into a global model to achieve optimal efficiency. A major obstacle is label switching, which breaks naïve split-and-conquer strategies because component estimates on different clients are not aligned.

We addressed this challenge by averaging the mixing distributions across clients and applying MR to project the result back into the correct parameter space [12]. The resulting estimator:

- is computationally efficient,

- achieves the optimal convergence rate, and

- is asymptotically equivalent to the global maximum likelihood estimator under standard assumptions.
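The averaging step can be illustrated with a short sketch. It assumes each client reports a fitted Gaussian mixture as a `(weights, means, covariances)` tuple and that clients are weighted by their share of the total sample size; both the data layout and the helper names `pool_local_mixtures` and `mixture_pdf` are hypothetical. Pooling operates on mixing distributions rather than on labeled parameters, so the result does not depend on how any client happens to order its components.

```python
import numpy as np

def pool_local_mixtures(local_fits, sample_sizes):
    """Average local mixing distributions into one large pooled mixture.

    local_fits: list of (weights (K,), means (K, d), covariances (K, d, d)) tuples.
    sample_sizes: number of observations held by each client (assumed weighting).
    """
    lam = np.asarray(sample_sizes, dtype=float)
    lam = lam / lam.sum()  # client proportions
    w = np.concatenate([l * np.asarray(f[0]) for l, f in zip(lam, local_fits)])
    mus = np.concatenate([np.asarray(f[1]) for f in local_fits])
    Sigmas = np.concatenate([np.asarray(f[2]) for f in local_fits])
    return w, mus, Sigmas

def mixture_pdf(x, w, mus, Sigmas):
    """Density of a Gaussian mixture at a single point x of shape (d,)."""
    d = mus.shape[1]
    total = 0.0
    for wk, mu, S in zip(w, mus, Sigmas):
        diff = x - mu
        quad = diff @ np.linalg.solve(S, diff)
        norm = np.sqrt((2.0 * np.pi) ** d * np.linalg.det(S))
        total += wk * np.exp(-0.5 * quad) / norm
    return total
```

The pooled mixture has as many components as all clients combined, which is why a reduction step is then needed to bring it back to the target order.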

To handle Byzantine failures, where some clients transmit corrupted information, we developed DFMR, a robust extension that retains both efficiency and statistical guarantees [13].

Key papers:

- Zhang, Q. and Chen, J. (2022). Distributed Learning of Finite Gaussian Mixtures. Journal of Machine Learning Research, 23(1), 4265–4304.

- Zhang, Q., Tan, Y. S., and Chen, J. (2026). Byzantine-Tolerant Distributed Learning of Finite Mixture Models. Journal of the Royal Statistical Society: Series B.

Application 2: 3D Gaussian splatting compaction

We further extended MR to tackle a critical computational bottleneck in 3D Gaussian Splatting (3DGS), a state-of-the-art technique for reconstructing 3D scenes from 2D images (see demo).

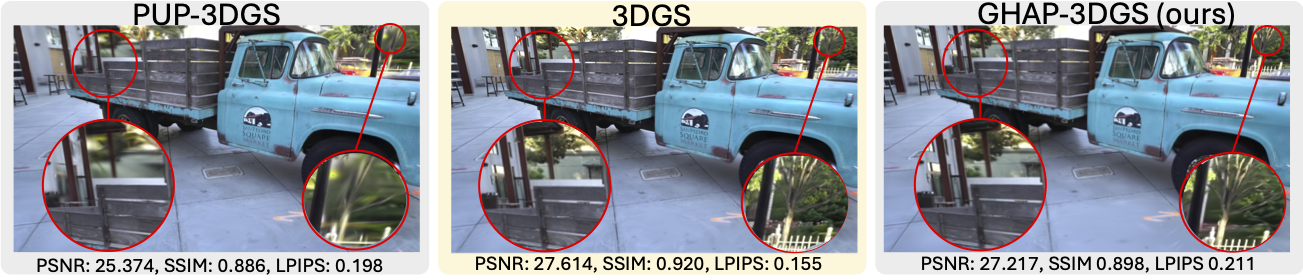

The widely used 3DGS algorithm [14] represents scenes with millions of Gaussians, each associated with a color function. While highly expressive, this representation introduces substantial redundancy, leading to prohibitive storage and rendering costs, particularly on mobile and AR/VR platforms. Existing compaction strategies [15–25] typically rely on pruning, which often sacrifices geometric fidelity and produces visible distortions.

In contrast, our approach [26] interprets 3DGS representations as Gaussian mixtures, with opacities corresponding to mixing weights. This perspective allows principled compaction via MR, naturally preserving geometric structure and avoiding distortion. For scalability, we designed a novel cost function that admits closed-form, low-cost updates, coupled with a block-wise algorithm guided by KD-trees to process large-scale scenes efficiently.
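The mixture view and the block-wise partitioning can be sketched as follows. This is an illustration under stated assumptions rather than the method of [26]: it assumes scales and opacities are already activated (positive scales, opacities in (0, 1]), that quaternions are in (w, x, y, z) order, and that opacities are normalized to sum to one to serve as mixing weights. The recursive median split is a simple stand-in for a KD-tree, and all function names are hypothetical.

```python
import numpy as np

def quat_to_rotmat(q):
    """Rotation matrix from a quaternion in (w, x, y, z) order."""
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

def splats_to_mixture(means, scales, quats, opacities):
    """View splats as a Gaussian mixture: covariance R S S^T R^T, weights from opacity."""
    covs = np.empty((len(means), 3, 3))
    for i, (s, q) in enumerate(zip(scales, quats)):
        R = quat_to_rotmat(q)
        covs[i] = R @ np.diag(s ** 2) @ R.T
    weights = opacities / opacities.sum()  # assumed normalization
    return weights, means, covs

def kd_blocks(means, max_block_size):
    """Split splat indices into spatial blocks by recursive median cuts (KD-tree style)."""
    def split(idx):
        if len(idx) <= max_block_size:
            return [idx]
        pts = means[idx]
        axis = np.argmax(pts.max(axis=0) - pts.min(axis=0))  # cut the widest axis
        order = idx[np.argsort(pts[:, axis], kind="stable")]
        half = len(order) // 2
        return split(order[:half]) + split(order[half:])
    return split(np.arange(len(means)))
```

Each block returned by `kd_blocks` contains spatially nearby Gaussians, so a reduction routine can be run on the blocks independently, which keeps the cost of each assignment step bounded by the block size.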

Our post hoc method is pipeline-agnostic and achieves state-of-the-art performance:

- Reduces the number of Gaussians by up to 90% while maintaining geometric accuracy (Figure 2).

- Outperforms pruning-based methods such as PUP 3D-GS [20] while remaining competitive with the original 3DGS representation.

To our knowledge, this represents the first application of MR to 3DGS, underscoring the broader potential of statistically grounded mixture reduction for efficient and scalable AI representations.

Figure 2: Visual comparison. When reducing the number of Gaussians by 90%, our method outperforms other compaction techniques, such as PUP 3D-GS, and remains competitive with the original 3DGS.

Key paper: Wang, T., Li, M., Zeng, G., Meng, C., and Zhang, Q. (2025). Gaussian Herding across Pens: An Optimal Transport Perspective on Global Gaussian Reduction for 3DGS. NeurIPS 2025 (Spotlight).

References

1. Shen, S. et al. (2024). Mixture-of-Experts Meets Instruction Tuning: A Winning Combination for Large Language Models. ICLR 2024, vol. 2024, pp. 18858–18884.

2. Sukhbaatar, S. et al. (2024). Branch-train-mix: Mixing expert LLMs into a mixture-of-experts LLM. arXiv:2403.07816.

3. Crouse, D. F., Willett, P., Pattipati, K., and Svensson, L. (2011). A look at Gaussian mixture reduction algorithms. 14th International Conference on Information Fusion, pp. 1–8. IEEE.

4. Salmond, D. J. (1990). Mixture reduction algorithms for target tracking in clutter. SPIE Signal and Data Processing of Small Targets, vol. 1305, p. 434.

5. Runnalls, A. R. (2007). Kullback-Leibler approach to Gaussian mixture reduction. IEEE Transactions on Aerospace and Electronic Systems, 43(3), 989–999.

6. Davis, J. V. and Dhillon, I. S. (2007). Differential Entropic Clustering of Multivariate Gaussians. Advances in Neural Information Processing Systems 19, pp. 337–344.

7. Assa, A. and Plataniotis, K. N. (2018). Wasserstein-distance-based Gaussian mixture reduction. IEEE Signal Processing Letters, 25(10), 1465–1469.

8. Yu, L., Yang, T., and Chan, A. B. (2019). Density-Preserving Hierarchical EM Algorithm: Simplifying Gaussian Mixture Models for Approximate Inference. IEEE Transactions on Pattern Analysis and Machine Intelligence, 41(6), 1323–1337.

9. Williams, J. L. and Maybeck, P. S. (2006). Cost-function-based hypothesis control techniques for multiple hypothesis tracking. Mathematical and Computer Modelling, 43(9–10), 976–989.

10. Huber, M. F. and Hanebeck, U. D. (2008). Progressive Gaussian mixture reduction. 11th International Conference on Information Fusion, pp. 1–8. IEEE.

11. Zhang, Q., Zhang, A. G., and Chen, J. (2024). Gaussian Mixture Reduction With Composite Transportation Divergence. IEEE Transactions on Information Theory, 70(7), 5191–5212.

12. Zhang, Q. and Chen, J. (2022). Distributed Learning of Finite Gaussian Mixtures. Journal of Machine Learning Research, 23(1), 4265–4304.

13. Zhang, Q., Tan, Y. S., and Chen, J. (2026). Byzantine-Tolerant Distributed Learning of Finite Mixture Models. Journal of the Royal Statistical Society: Series B, qkag065.

14. Kerbl, B., Kopanas, G., Leimkühler, T., and Drettakis, G. (2023). 3D Gaussian Splatting for Real-Time Radiance Field Rendering. ACM Transactions on Graphics, 42(4), 1–14.

15. Fan, Z. et al. (2024). LightGaussian: Unbounded 3D Gaussian compression with 15× reduction and 200+ FPS. Advances in Neural Information Processing Systems, vol. 37, pp. 140138–140158.

16. Fang, G. and Wang, B. (2024). Mini-Splatting: Representing scenes with a constrained number of Gaussians. European Conference on Computer Vision (ECCV 2024), pp. 165–181. Springer.

17. Niemeyer, M. et al. (2024). RadSplat: Radiance field-informed Gaussian splatting for robust real-time rendering with 900+ FPS. arXiv:2403.13806.

18. Liu, R., Xu, R., Hu, Y., Chen, M., and Feng, A. (2024). AtomGS: Atomizing Gaussian splatting for high-fidelity radiance field. arXiv:2405.12369.

19. Ali, M. S. et al. (2024). Trimming the fat: Efficient compression of 3D Gaussian splats through pruning. arXiv:2406.18214.

20. Hanson, A. et al. (2024). PUP 3D-GS: Principled uncertainty pruning for 3D Gaussian splatting. arXiv:2406.10219.

21. Zhang, Z. et al. (2024). LP-3DGS: Learning to prune 3D Gaussian splatting. Advances in Neural Information Processing Systems, vol. 38.

22. Lee, J. C., Rho, D., Sun, X., Ko, J. H., and Park, E. (2024). Compact 3D Gaussian representation for radiance field. IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2024), pp. 21719–21728.

23. Chen, Y. et al. (2024). HAC: Hash-grid assisted context for 3D Gaussian splatting compression. European Conference on Computer Vision (ECCV 2024), pp. 422–438. Springer.

24. Matsuki, H., Murai, R., Kelly, P. H. J., and Davison, A. J. (2024). Gaussian Splatting SLAM. IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR 2024), pp. 18039–18048.

25. Ji, Y. et al. (2024). NEDS-SLAM: A neural explicit dense semantic SLAM framework using 3D Gaussian splatting. IEEE Robotics and Automation Letters, 9(10), 8778–8785.

26. Wang, T., Li, M., Zeng, G., Meng, C., and Zhang, Q. (2025). Gaussian Herding across Pens: An Optimal Transport Perspective on Global Gaussian Reduction for 3DGS. NeurIPS 2025.