Overview

Empirical likelihood (EL) [1] is a classical nonparametric framework that enables likelihood-based inference (confidence intervals, likelihood ratio tests, and so on) without imposing parametric assumptions on the data-generating process. This framework was later reinterpreted as a way to integrate data across heterogeneous populations via density ratio modeling [2–7]. Specifically, instead of estimating each population's distribution separately, a baseline distribution is modeled nonparametrically, and the distribution of each population is related to it via a multiplicative tilt.
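In symbols, a density ratio model of this kind is commonly written as below. The notation is an assumption made for illustration: $F_0$ denotes the baseline distribution, $F_1, \dots, F_m$ the population distributions, and $q(x)$ a vector of basis functions, which may be prespecified or, in the neural setting, learned.

```latex
dF_k(x) = \exp\{\alpha_k + \beta_k^\top q(x)\}\, dF_0(x), \qquad k = 1, \dots, m.
```

Here $F_0$ is left unspecified, and each intercept $\alpha_k$ is pinned down by the requirement that $F_k$ integrate to one.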

While much of the classical literature on EL has focused on traditional problems such as goodness-of-fit testing or capture–recapture analysis, my research modernizes EL for AI and machine learning, leveraging neural networks to enrich density ratio modeling while conversely using EL to inspire new algorithmic paradigms.

Application 1: Reimagining FL — From aggregation to guidance

In federated learning (FL), multiple clients — such as hospitals — collaboratively train models without sharing raw data. Existing FL methods primarily focus on aggregating updates from clients into a single global model, but this often underutilizes the rich heterogeneity present across sites. For instance, hospitals may specialize in different patient subgroups, suggesting that client diversity should be leveraged rather than suppressed.

We use EL to reimagine FL, shifting the paradigm from aggregation to guidance [8]. Focusing on multi-class classification, we formulate a density ratio model to allow for constrained heterogeneity across classes and clients. After profiling out the nonparametric baseline, the resulting loss function decomposes naturally into two cross-entropy terms:

- one that encourages accuracy for predicting the class label of each individual, and

- another for identifying which client they originate from.
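As a rough illustration of this two-term structure, the sketch below adds a class-label cross-entropy to a client-membership cross-entropy. It is not the loss from [8]: the function names, the list-of-rows input format, and the `client_weight` knob are all hypothetical, and the exact form of the profiled loss may differ.

```python
import math

def cross_entropy(logits, targets):
    """Mean softmax cross-entropy; logits is a list of rows, targets a list of ints."""
    total = 0.0
    for row, t in zip(logits, targets):
        m = max(row)  # subtract the max for numerical stability
        log_norm = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_norm - row[t]  # negative log-probability of the target
    return total / len(targets)

def two_term_loss(class_logits, client_logits, labels, client_ids, client_weight=1.0):
    """Sum of a class-label term and a client-membership term.

    client_weight is a hypothetical balancing factor, not taken from the source.
    """
    label_term = cross_entropy(class_logits, labels)
    client_term = cross_entropy(client_logits, client_ids)
    return label_term + client_weight * client_term
```

With uninformative (all-zero) logits over three classes and two clients, the two terms reduce to log 3 and log 2, which gives a quick sanity check on an implementation.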

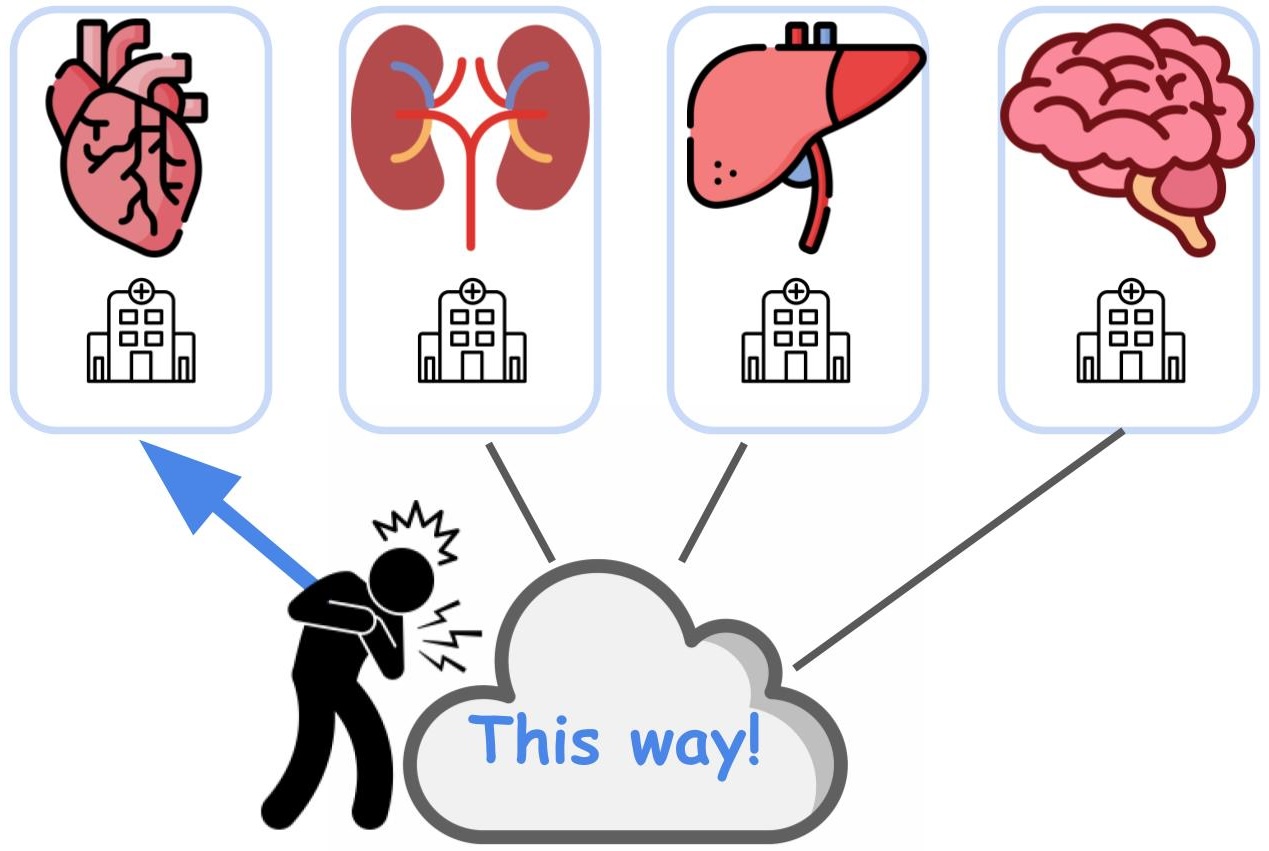

We formulate an efficient algorithm to optimize this loss function while respecting FL data-sharing constraints. Beyond more efficient data pooling, our EL approach proposes a paradigm shift: the central server acts not only as an aggregator but as an intelligent router, directing new tasks or queries to the client most specialized for them (Figure 1). This new perspective transforms client diversity from a challenge into an asset.

Figure 1: Cartoon illustration. The FL server as an intelligent router: leveraging learned data distributions to direct queries to the most specialized client, rather than applying a global model.
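The routing idea can be sketched in a few lines. The example assumes that the server holds, for each query, one score per client (for instance, the client-membership logits of a trained model) and sends the query to the highest-scoring client. The function name and the scoring rule are illustrative assumptions, not the procedure from [8].

```python
def route_queries(client_scores):
    """For each query (one row of scores), return the index of the top-scoring client."""
    return [max(range(len(row)), key=row.__getitem__) for row in client_scores]

# Two queries scored against three clients (made-up numbers).
scores = [[2.0, 0.1, -1.0],
          [0.0, 0.5, 3.0]]
print(route_queries(scores))  # prints [0, 2]
```

Because softmax is monotone, taking the argmax over raw scores gives the same routing decision as taking it over the corresponding probabilities.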

Key paper: Wang, Z., Zhang, X., Zhang, X., Liu, Y., and Zhang, Q. (2026). Beyond Aggregation: Guiding Clients in Heterogeneous Federated Learning. ICLR 2026. Paper link

Application 2: Learning from noisy labels

Label noise is a pervasive challenge in real-world machine learning. Datasets often contain annotation mistakes, disagreement between annotators, or ambiguity in label definitions. Even widely used benchmarks are not immune, and in specialized domains such as healthcare, label uncertainty is often unavoidable. Studies estimate that roughly 5% of labels are incorrect [9], with additional evidence of noise in datasets like MNIST and ImageNet [10], and in CheXpert, where radiology reports can lead to systematic mislabeling [11]. Ignoring such noise causes models to fit corrupted labels rather than recover the underlying signal.

This issue becomes more critical in high-stakes settings, where different types of errors carry very different consequences. For example, failing to detect a serious disease is far more costly than a false alarm, and approving a fraudulent transaction is riskier than rejecting a legitimate one. In such settings, the goal is not just high overall accuracy, but reliable control of specific types of errors.

We use empirical likelihood (EL) to develop a principled framework for learning under label noise in multiclass classification. Rather than treating noisy labels as a preprocessing problem, we explicitly model how noisy labels relate to the underlying true labels.

The key insight is twofold:

- The feature distributions across classes are naturally linked through a density ratio model.

- The distribution of features given a noisy label can be expressed as a convex combination of the clean class-conditional distributions.
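These two observations can be written compactly as follows. The notation is assumed for illustration: $f_k$ is the density of the features given true label $Y = k$ (with class 0 as the reference), $\tilde f_j$ is the density given noisy label $\tilde Y = j$, and $w_{jk} = P(Y = k \mid \tilde Y = j)$. The convex-combination form holds, for instance, when the noisy label depends on the features only through the true label.

```latex
\begin{aligned}
f_k(x) &= \exp\{\alpha_k + \beta_k^\top x\}\, f_0(x), \qquad k = 1, \dots, K-1, \\
\tilde f_j(x) &= \sum_{k=0}^{K-1} w_{jk}\, f_k(x)
  = \Big[\sum_{k=0}^{K-1} w_{jk} \exp\{\alpha_k + \beta_k^\top x\}\Big] f_0(x),
  \qquad w_{jk} \ge 0, \quad \sum_{k=0}^{K-1} w_{jk} = 1,
\end{aligned}
```

with the convention $\alpha_0 = 0$ and $\beta_0 = 0$, so that every noisy-label density is itself a tilt of the common reference $f_0$.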

These observations allow all relevant distributions to be represented as parametric tilts of a common reference distribution. Leveraging EL, we estimate this reference distribution nonparametrically and recover the tilting parameters through profiling—yielding estimators that target the underlying clean-label quantities.

A central challenge in this problem is that, without additional structure, learning from noisy labels is fundamentally non-identifiable: different underlying truths can produce the same observed data. Our work shows that the density ratio structure resolves this issue. Under mild conditions, it allows us to uniquely recover the key quantities needed for learning—without requiring prior knowledge of the noise mechanism. To our knowledge, this type of identifiability result is new in this setting.

Building on this, we develop an EL-based estimation procedure that is both statistically principled and computationally practical. The method achieves standard statistical guarantees (consistency and asymptotic normality), and can be efficiently implemented via an EM algorithm, with a simple weighted logistic regression step. We then integrate these estimates into the Neyman–Pearson multiclass classification (NPMC) framework [12], which minimizes weighted misclassification risk subject to constraints on class-specific error rates. Such guarantees are essential in applications where certain mistakes must be strictly controlled.
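For concreteness, the NPMC problem described above can be stated as the constrained program below. The symbols are chosen here for illustration and may differ from those in [12]: $\phi$ is the classifier, $c_k \ge 0$ are the class weights in the objective, $\mathcal{A}$ is the set of classes whose error rates are constrained, and $\varepsilon_k$ are the target error levels.

```latex
\min_{\phi} \; \sum_{k} c_k \, P\big(\phi(X) \neq k \mid Y = k\big)
\quad \text{subject to} \quad
P\big(\phi(X) \neq k \mid Y = k\big) \le \varepsilon_k, \qquad k \in \mathcal{A}.
```

Both the objective and the constraints are defined in terms of the true label $Y$, which is why estimates that target clean-label quantities are needed when only noisy labels are observed.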

When label noise is ignored, NPMC can become overly conservative, leading to inflated errors elsewhere. By contrast, our EL-based approach achieves robust and theoretically grounded control of class-specific errors, even in the presence of corrupted labels—and provides, to our knowledge, the first such guarantees in the multiclass setting without assuming knowledge of the noise process.

This line of work reflects a broader goal: using empirical likelihood to bring statistical rigor and interpretability to modern machine learning problems involving imperfect data.

Key paper: Zhang, Q., Tian, Q., and Li, P. (2025). Neyman–Pearson Multiclass Classification under Label Noise. arXiv preprint. Paper link

References

1. Owen, A. B. (2001). Empirical Likelihood. Chapman and Hall/CRC.

2. Qin, J. and Zhang, B. (1997). A goodness-of-fit test for logistic regression models based on case-control data. Biometrika, 84(3), 609–618.

3. Fokianos, K., Kedem, B., Qin, J., and Short, D. A. (2001). A semiparametric approach to the one-way layout. Technometrics, 43(1), 56–65.

4. Chen, J. and Liu, Y. (2013). Quantile and quantile-function estimations under density ratio model. The Annals of Statistics, 41(3), 1669–1692.

5. Li, P., Liu, Y., and Qin, J. (2017). Semiparametric inference in a genetic mixture model. Journal of the American Statistical Association, 112(519), 1250–1260.

6. Liu, Y., Li, P., and Qin, J. (2017). Maximum empirical likelihood estimation for abundance in a closed population from capture-recapture data. Biometrika, 104(3), 527–543.

7. Liu, S. et al. (2025). Positive and Unlabeled Data: Model, Estimation, Inference, and Classification. Journal of the American Statistical Association, pp. 1–12.

8. Wang, Z., Zhang, X., Zhang, X., Liu, Y., and Zhang, Q. (2026). Beyond Aggregation: Guiding Clients in Heterogeneous Federated Learning. ICLR 2026.

9. Yao, S., Rava, B., Tong, X., and James, G. (2023). Asymmetric error control under imperfect supervision: a label-noise-adjusted Neyman–Pearson umbrella algorithm. Journal of the American Statistical Association, 118(543), 1824–1836.

10. Northcutt, C., Jiang, L., and Chuang, I. (2021). Confident learning: Estimating uncertainty in dataset labels. Journal of Artificial Intelligence Research, 70, 1373–1411.

11. Irvin, J. et al. (2019). CheXpert: A large chest radiograph dataset with uncertainty labels and expert comparison. AAAI 2019, 33(01), 590–597.

12. Tian, Y. and Feng, Y. (2025). Neyman-Pearson Multi-class Classification via Cost-sensitive Learning. Journal of the American Statistical Association, 120(550), 1164–1177.